<short version> Google takes some user supplied data in a URL parameter which it expects to be a domain name, but does not validate it is a proper domain name or sanitise it. They then inject this value into the page inside an inline block of Javascript, controlling the next page you will visit (halfway through the login flow), which allows you to replace it with a path of your choosing. This path can be a logout URL which then uses a second user supplied parameter to control the ongoing redirect (cross-origin permitted). So essentially, because the user input is not treated properly an attacker can redirect someone who is halfway through logging in to a page of their choice. Google have now fixed it.</short version>

In July 2017, I reported an issue to Google that allowed an attacker to inject a malicious link into a standard Google ‘account chooser’ screen, such that they could hijack the login process. Google failed to properly validate a URL parameter, expected to be a domain name, before placing it server side into some inline Javascript on the page.

Specifically, it allowed an attacker to craft a URL to an authentic Google login page (where the user must pick from a list of their accounts) such that the destination for any one of those accounts was a page the attacker controls.

Method

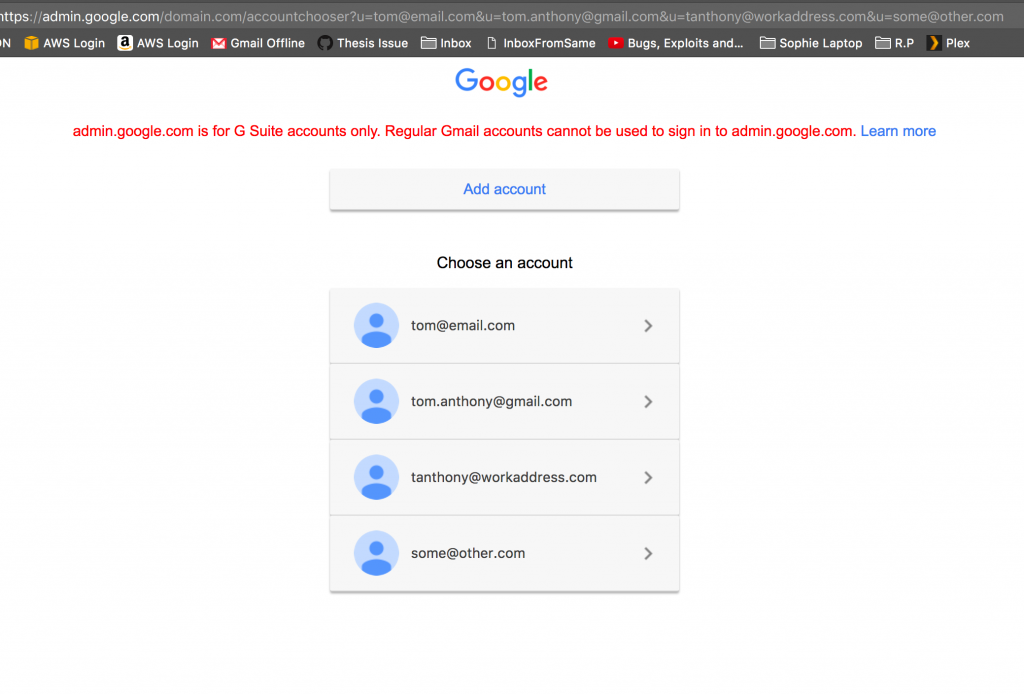

For people with multiple Google accounts, such as myself, we are quite accustomed to being presented with a list of our Google accounts from which we need to select one to log in to. One form of this Google page embeds (for reasons lost on me) the list of email addresses to show inside the URL:

(It is normal for there to be only placeholder icons, hack or not)

I first discovered this page well over a year ago, but other than manipulating the email addresses to say rude words I couldn’t find much malicious to do with it. I recently re-discovered the page, and thought I would take another look at what I could do with it.

I discovered the page uses the parameters not only to present the list of email addresses but also as a factor in controlling where the login flow takes the user to when they select an account, which involves a failure in server-side validation.

Specifically, I found that if I presented emails in a certain format, the section after the @ symbol would be used as the path for the following page. Because it is a GSuite page, some server-side code attempts to parse the domain from the email and then uses that to form the path for the following page. However, it does not validate the domain correctly, allowing an attacker to form arbitrary paths.

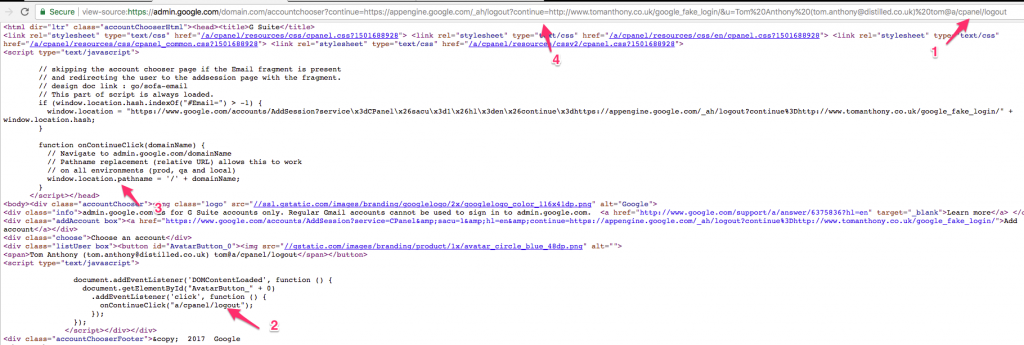

The improperly parsed ‘domain’ is then embedded inside some inline Javascript page that controls the login flow:

The screenshot shows the value in the URL [marked 1], being embedded into inline Javascript [2]. This value is expected to be a domain, but the server-side code does little or no validation. For example, it does not ensure the presence of a TLD, and it allows forward slashes.
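To make the missing check concrete, here is a minimal sketch of the kind of validation that would reject the values described above. The function name and the exact rules are my own assumptions for illustration, not Google's actual code or fix: it treats everything after the last @ as the candidate domain and only accepts dot-separated hostname labels ending in an alphabetic TLD.

```python
import re
from typing import Optional

# Hypothetical rule for one hostname label: letters, digits and inner hyphens, 1-63 chars.
_LABEL = re.compile(r"[A-Za-z0-9](?:[A-Za-z0-9-]{0,61}[A-Za-z0-9])?")


def extract_domain(email: str) -> Optional[str]:
    """Return the lowercased domain of `email` if it looks like a real domain, else None."""
    local, sep, domain = email.rpartition("@")
    if not sep or not local or len(domain) > 253:
        return None
    labels = domain.split(".")
    # Require at least "name.tld"; a bare word has no TLD.
    if len(labels) < 2:
        return None
    # Every label must match fully, so slashes, spaces and empty labels are rejected.
    if not all(_LABEL.fullmatch(label) for label in labels):
        return None
    # Simplification: only alphabetic TLDs are accepted (punycode TLDs are rejected).
    if not labels[-1].isalpha():
        return None
    return domain.lower()
```

With a check along these lines, a value containing a forward slash or lacking a TLD would never reach the inline Javascript in the first place.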

However, adding such a path did disfigure the listings on the page, such that it was obvious that something was amiss. To counteract this, I abused the fact that CSS was used to crop overly long email addresses: given that invalid email addresses are allowed through here, I dressed the entry up by putting the full name of the user first (as is standard on these pages most of the time anyway), so that the injected path was cropped out of view.

To get URL parameters to pass through, I simply put them in the URL before the list of u parameters specifying emails (marked [4] in the screenshot), and these parameters would then be added to the destination path. You can see, marked [3] in the screenshot, that this worked because window.location.pathname is used, which means the query string remains intact.
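The effect of changing only the path can be modelled outside the browser. The sketch below uses Python's urllib.parse to mimic what assigning window.location.pathname does: the path is replaced while the rest of the URL, including the query string, is carried over. The host, paths and parameter names are placeholders I made up, not the real Google URLs, and the model skips the percent-encoding a browser would apply to the new path.

```python
from urllib.parse import urlsplit


def navigate_by_pathname(current_url: str, new_path: str) -> str:
    """Mimic assigning location.pathname: swap the path, keep everything else."""
    parts = urlsplit(current_url)
    if not new_path.startswith("/"):
        new_path = "/" + new_path
    # _replace only touches the path; scheme, host, query and fragment are untouched.
    return parts._replace(path=new_path).geturl()


# Hypothetical example: the attacker-supplied query string survives the navigation.
start = "https://accounts.example.com/chooser?continue=https%3A%2F%2Fattacker.example&u=a%40b"
print(navigate_by_pathname(start, "/logout"))
```

The point is that whoever controls the new path also gets to pick which endpoint receives the parameters already sitting in the URL.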

Now I was able to abuse an existing open redirect on a logout redirect page (so it doesn’t require users to be logged in already to work), chained via another Google open redirect, to forward the user to any page of my choosing. There are many such open redirectors on Google.com, so there are many options for abusing this.
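For context, an open redirect is a page that forwards the browser to whatever URL a parameter names. A common defence is to follow only targets whose host is on an explicit allowlist. The following is a generic sketch of that check; the allowlist and the function name are illustrative assumptions, not a description of how Google's redirectors work.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist of hosts the redirector is willing to send users to.
ALLOWED_HOSTS = {"accounts.example.com", "www.example.com"}


def is_safe_redirect(target: str) -> bool:
    """Return True only for absolute https URLs whose host is on the allowlist."""
    # Browsers treat backslashes like slashes and strip some whitespace and control
    # characters, so reject them outright instead of guessing how they will be parsed.
    if "\\" in target or any(ord(ch) <= 0x20 for ch in target):
        return False
    try:
        parts = urlsplit(target)
    except ValueError:
        return False
    # Simplification: relative and scheme-relative targets are rejected too.
    if parts.scheme != "https":
        return False
    return parts.hostname in ALLOWED_HOSTS
```

A redirector that applied a check like this to its destination parameter would refuse to forward to an attacker-controlled origin.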

Here is a demo:

The redirect I am using shows a redirect message, but I did later sidestep that. You could also redirect to a more misleading domain, of course. The result is that you start on an authentic Google account page, and when you select an account, which you expect to take you to a password entry page, you arrive at one. However, this page is actually a malicious page controlled by the attacker, who is now going to steal your login details. They can then redirect you back to an official Google page to cover their tracks.

I reported this to Google, who said they already had a bug filed against it, but after some back and forth it seems that was something else. Unfortunately, Google deemed that it “does not meet the bar for a financial reward”.

Disclosure Timeline

17th July 2017 – I reported this to the security team via their form.

17th July 2017 – I heard back it was triaged and awaiting attention.

20th July 2017 – I followed up to supply some more information. I was concerned it would be flagged purely as a phishing issue, or open redirect. I also thought they may think it was just the issue that an attacker can control the list of accounts and miss the fact that the URL parsing is broken such that malicious links can be injected.

20th July 2017 – The team came back to me and told me they were already aware of this issue, so it was not eligible for a bug bounty.

21st July 2017 – I shared a draft of this write up with the Google team.

3rd August 2017 – Google came back letting me know they were working on the issue, but in doing so also revealed that (due to my poor explanation!) they had misunderstood the primary issue I was reporting.

4th+7th August 2017 – I replied giving some more details on the exact issue.

11th August 2017 – I followed up.

17th August 2017 – Google replied saying it was actually different, and saying I should follow up in 2-3 weeks.

14th September 2017 – I followed up.

15th September 2017 – Google security team replied saying they hadn’t heard back from the team responsible, and said they would follow up.

2nd October 2017 – I followed up.

12th October 2017 – Google team said still no status update.

3rd November 2017 – I followed up.

20th November 2017 – I followed up.

15th December 2017 – Google team said still no status update.

25th January 2018 – I followed up.

13th March 2018 – Google team said still no status update.

14th April 2018 – I followed up, now 9 months since report.

15th May 2018 – Google let me know a fix is a few days out.

8th June 2018 – Google confirmed I can publish.

2nd August 2018 – Google confirmed it “does not meet the bar for a financial reward”.

Similar to the other bug that I recently reported, this is quite a specific attack as it needs to target a specific user, but it is potentially high impact. Furthermore, it may be that the missing validation on the parameter allows for other nefarious uses, but I didn’t dig deeper once I identified the issue.

As usual, a big thanks to the Google team! 🙂