In the last couple of days Google announced that they were going to start executing JavaScript on most pages they visit, rendering pages much more like our browsers do. It was inevitable they’d need to do this, so it is a welcome update.

Then today, they announced an updated tool in Webmaster Tools that extends the previous “Fetch as Googlebot” feature with an additional option, which enables these new JavaScript capabilities and returns a screenshot of the rendered page. Very cool!

In my experimentation with this tool I confirmed that this new headless-browser Googlebot seems to accept and store cookies, and to send them back to your server too (along with referrer headers).

The Setup

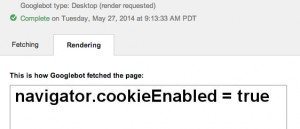

I started by creating a simple script to check the navigator.cookieEnabled JS property, and then fetched that page in the new Webmaster Tools feature:

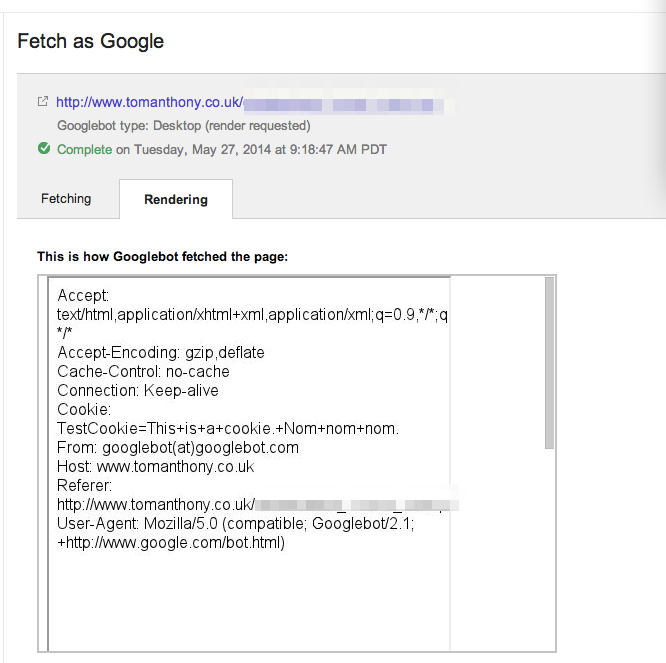
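The exact script isn't reproduced in the text, so here is a minimal sketch of what such a check could look like. The function name `describeCookieSupport` and the output strings are my own placeholders, not taken from the original test page.

```javascript
// Hypothetical helper (not from the original post): takes a navigator-like
// object and reports whether it claims cookies are enabled.
function describeCookieSupport(nav) {
  return nav && nav.cookieEnabled === true
    ? 'Cookies enabled'
    : 'Cookies disabled';
}

// In a browser (or any renderer that executes JavaScript), write the result
// into the page so it is visible in a rendered screenshot.
// Assumes the script is placed at the end of <body>, so document.body exists.
if (typeof document !== 'undefined' && typeof navigator !== 'undefined') {
  document.body.textContent = describeCookieSupport(navigator);
}
```

Note that navigator.cookieEnabled only tells you what the client claims, which is why a server-side check of the returned Cookie header is still needed.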

So now the trick was to check whether Google would really store and return a cookie. I settled on a simple setup: a PHP script that set a cookie and output a page containing an iframe. The document in that iframe was a second script, which output the HTTP headers Google sent with the request:

You can see that, sure enough, Googlebot sends back a Cookie header with the value I had set in the ‘outer’ script. It sends a Referer header too.
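For anyone wanting to repeat the test, the outer/inner arrangement described above could be sketched roughly as follows. The original used PHP; this is a Node.js stand-in, and the cookie name, cookie value, and the `/outer` and `/inner` paths are all assumptions made for illustration.

```javascript
// All names here are hypothetical: COOKIE_NAME, COOKIE_VALUE, /outer, /inner.
const COOKIE_NAME = 'googlebot_test';
const COOKIE_VALUE = 'hello-from-outer';

// "Outer" page: sets a cookie and embeds the inner page in an iframe.
function outerResponse() {
  return {
    status: 200,
    headers: {
      'Content-Type': 'text/html; charset=utf-8',
      'Set-Cookie': `${COOKIE_NAME}=${COOKIE_VALUE}; Path=/`,
    },
    body: '<!doctype html><title>outer</title>' +
      '<iframe src="/inner" width="600" height="400"></iframe>',
  };
}

// Escape text so echoed header values cannot inject markup.
function escapeHtml(text) {
  return String(text)
    .replace(/&/g, '&amp;')
    .replace(/</g, '&lt;')
    .replace(/>/g, '&gt;');
}

// "Inner" page: echoes the request headers it received, one per line.
function innerResponse(requestHeaders) {
  const lines = Object.entries(requestHeaders)
    .map(([name, value]) => `${name}: ${value}`)
    .join('\n');
  return {
    status: 200,
    headers: { 'Content-Type': 'text/html; charset=utf-8' },
    body: `<!doctype html><title>inner</title><pre>${escapeHtml(lines)}</pre>`,
  };
}

// Wire both pages into a small HTTP server. The http module is loaded here
// so the helpers above stay usable on their own.
function startServer(port) {
  const http = require('http');
  return http
    .createServer((req, res) => {
      const path = req.url.split('?')[0];
      let response;
      if (path === '/outer') {
        response = outerResponse();
      } else if (path === '/inner') {
        response = innerResponse(req.headers);
      } else {
        response = {
          status: 404,
          headers: { 'Content-Type': 'text/plain' },
          body: 'Not found',
        };
      }
      res.writeHead(response.status, response.headers);
      res.end(response.body);
    })
    .listen(port);
}
```

Calling `startServer(8080)` and fetching `/outer` with a client that stores cookies should show a `cookie` line (and usually a `referer` line) in the iframe. Node lowercases incoming header names, so they will not appear as `Cookie` and `Referer`.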

What does this mean?!

So far it is too early to tell. Google doesn’t keep the cookies between runs of the tool, so it really depends on how they treat things when actively crawling pages. If Google crawls multiple pages the way it handles a single run of the tool, then we should see cookie-affected results appearing in the index. But it might be that Google crawls each page separately, in a different ‘session’, in which case this won’t change very much.

6 responses to “Googlebot now accepting Cookies”

I noticed the same yesterday. So far the only bot that uses this is “Fetch and render”.

I don’t think that this will affect the main bot, because it is cookie-less and referrer-less, just like other bots on the internet.

It appears that the regular googlebot (not Fetch tool) is now sending referer headers when it requests page resources (have caught it sending them on requests for CSS and JS files).

Thanks for the info, bro.

I usually don’t check or use webmaster tools (I need to!) so would be hard for me to notice this change.

Although I don’t use it, this info is gold for me.

(sorry, stop to look for the name of your site… lol…)

Thanks, Tom, really helpful!

[…] a human, which relates to better processing of JavaScript and possibly cookies too, it is generally better to count on […]

I was not aware of this. It is good for many that Googlebot has started accepting cookies. Now crawling can be tracked easily.
